Imagine this classroom demonstration of liquefaction: The instructor opens a container of yogurt, turns it upside down and places the contents onto a plate. The yogurt “cone” retains the shape of its container, even when the instructor sets an apple on it. Then the instructor jiggles the plate rapidly side-to-side. The “yogurt cone” begins to collapse, and the apple slowly sinks into a yogurt puddle.

Voila. That is liquefaction.

ABOUT THE AUTHOR

Dr. John Stamatakos is a geologist and geophysicist with international experience in structural geology, earthquake seismology, tectonics, neotectonics and exploration geophysics. Stamatakos is the technical programs director for SwRI’s Center for Nuclear Waste Regulatory Analyses. He supports geological and geophysical evaluations of seismic and volcanic hazards at critical nuclear facilities.

Liquefaction occurs when loose soil, saturated with water, is shaken by an earthquake, causing the soil to behave like a liquid.

At the macro-scale, earthquakes can similarly liquefy solid ground in places where sandy, water-saturated layers lie beneath seemingly solid terrain. The stakes for recognizing and mitigating the potential for seismic-induced liquefaction are high. Liquefaction was responsible for much of the damage and loss of life from the Niigata and Great Alaska earthquakes in 1964. It was a major contributor to the destruction in San Francisco’s Marina District during the 1989 Loma Prieta earthquake. Liquefaction during a 2010-11 sequence of earthquakes in Christchurch, New Zealand, destroyed 15,000 single-family homes and severely damaged hundreds of buildings in that city’s central business district.

When the Loma Prieta earthquake hit, the third game of the 1989 World Series matchup between the San Francisco Giants and Oakland Athletics was about to begin. Because both Major League Baseball teams were based in the affected area, many people left work early or stayed late to participate in viewing parties. Traffic on the normally crowded freeways was unusually light. During the earthquake, liquefaction contributed to the collapse of Oakland’s Cypress Freeway across the bay, which killed 42 people, a number that likely would have been significantly higher if not for the game.

The collapse of the two-level Cypress Freeway was the most lethal event associated with the 1989 Loma Prieta earthquake. Reclaimed marshland amplified the shaking, and soil liquefaction occurred.

This USGS-based map illustrates expected levels of shaking from future earthquakes in the San Francisco Bay area. The most severe tremors generally follow the active faults; however, their intensity is also influenced by the type of materials underlying an area. Soft sediments tend to amplify and prolong shaking.

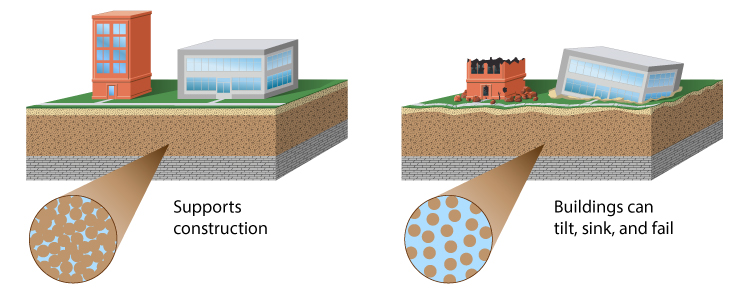

Normally, layers of soil remain separated and the surface is strong enough to support even large-scale construction projects. But under an earthquake’s tremors, strength and separation quickly disappear. In some cases, the ground may simply liquefy, swallowing cars, streets and buildings. In others, buried sewers can float to the surface, or watery mud can boil up from the ground, far from any stream or shore. Even earthquake-resistant buildings can tilt or tip over as the ground beneath their foundations gives way.

How is this possible? Liquefaction requires three factors. The first is soil composed of loose, granular sediment, often “made” land, such as marshland that has been filled with material to create solid ground. The second is groundwater that saturates the sediment, filling the spaces between the grains of sand and silt. The final factor is strong shaking. Liquefaction occurs when these sodden sediments experience powerful tremors. It can devastate large regions, causing widespread damage, as seen when the 1989 Loma Prieta earthquake shook the San Francisco region.

Middle: Liquefaction contributed to damage from the 1906 San Francisco earthquake, as evidenced by this tilted Victorian home.

Right: During the 1964 Niigata earthquake in Japan, liquefaction caused large areas to subside by up to 4 feet. Apartment complexes built on reclaimed land near the Shinano River sank, tilted and, in one case, completely tipped over.

Next-Generation Liquefaction Consortium

Achieving all objectives, however, will require a much larger effort than can be supported by a single client organization. With substantial experience in organizing, managing and successfully completing projects of this scope and scale, SwRI is working with PEER to launch the Next-Generation Liquefaction (NGL) consortium to that end. Potential members include U.S. and international clients that construct, manage or regulate critical infrastructure that could be susceptible to liquefaction.

DETAIL

Sand volcanos form when liquefaction or other processes eject sand onto surfaces from a central point. The sand builds up into volcano-shaped cones.

The NGL consortium will advance the state of the art in liquefaction research and provide end users with a consensus approach to assessing liquefaction potential. The consortium’s goal is to collect data using rigorous standards. Based on this database, scientists will create probabilistic models that provide hazard- and risk-consistent bases for assessing liquefaction susceptibility, its potential for being triggered in susceptible soils, and its likely impact. Multiple models for each step in the framework are likely to be developed, and because they will have been derived from a common database, differences in model predictions will reflect genuine, “apples-to-apples” uncertainties associated with different modeling approaches. Most importantly, the consortium is committed to an open and objective evaluation and integration of data, models and methods. The evaluation and integration of the data into new models and methods will be clear and transparent.

Following these principles will ensure that the resulting liquefaction triggering and consequence models are reliable, robust and vetted by the scientific community, providing a solid guide for designing, constructing and overseeing critical infrastructure projects.

Construction Concerns

Obviously, the potential for soil liquefaction is a major concern for engineers designing critical infrastructure such as dams, roads, pipelines and nuclear power plants in earthquake-prone areas. Over the years, scientists working separately have developed a number of different mathematical models to predict this potential. However, the models differ enough from each other that they may produce conflicting results: one may indicate that liquefaction will occur, and another that it will not.

New technical analysis of liquefaction events worldwide could help scientists resolve existing models into a single, reliable new model that geotechnical engineers can use with confidence. The National Academy of Sciences issued an appeal to the geotechnical community to advance understanding of soil liquefaction and to develop improved models to predict its occurrence and effects. To that end, SwRI’s Center for Nuclear Waste Regulatory Analyses (CNWRA®) is working with the Pacific Earthquake Engineering Research (PEER) Center to create the Next-Generation Liquefaction Consortium. The consortium will develop a reliable, open-source liquefaction database along with consequence models that all engineers and scientists can use.

Liquefaction Potential

Accurate engineering assessments are essential to cost-effectively protect life and infrastructure and to mitigate adverse economic, environmental and societal impacts of liquefaction. Early efforts to understand liquefaction potential involved testing soil samples in laboratory experiments. Scientists used reconstituted soil specimens of loose, clean, saturated sands similar to those known to have liquefied during earthquakes. Although those tests provided valuable insights into the soils’ mechanical properties, scientists discovered that conditions and characteristics of reconstituted test specimens did not accurately represent those soils in nature. The applicability of lab results to field conditions has proven tenuous.

Geotechnical engineers then shifted their evaluations to in situ behavior of soils based on case histories of significant liquefaction events. Scientists compared sites where liquefaction occurred with sites with no observed ground failure. For those sites with ground failure, they also evaluated the magnitude and distribution of ground cracking, settlement and lateral spreading. To understand why the ground failed at some sites but not others, scientists considered groundwater table depth, severity of shaking and soil resistance. The severity of shaking considers both the amplitude and duration of shear stresses induced in the soil by earthquakes. Soil resistance is measured by driving a soil sampler or instrumented cone vertically into the soil at a controlled rate. Scientists plotted the parameter combinations to identify characteristics corresponding to liquefaction, or the lack thereof.
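The article gives no formulas for these parameters. As a rough illustration only, the sketch below uses one widely cited formulation of shaking severity, the cyclic stress ratio (CSR) from the Seed-Idriss simplified procedure, to show how groundwater depth and shaking amplitude combine into a single demand value. The function names, the uniform soil unit weight and the example inputs are assumptions made for this sketch, the depth reduction factor `r_d` is supplied by the caller rather than computed, and the duration (magnitude) correction used in practice is left out.

```python
WATER_UNIT_WEIGHT = 9.81  # kN/m^3


def vertical_stresses(depth_m, water_table_m, soil_unit_weight=18.0):
    """Return (total, effective) vertical stress in kPa at depth_m.

    Assumption for illustration: a single soil unit weight (kN/m^3)
    applies both above and below the water table.
    """
    if depth_m <= 0:
        raise ValueError("depth_m must be positive")
    total = soil_unit_weight * depth_m
    # Pore water pressure acts only below the water table.
    pore_pressure = WATER_UNIT_WEIGHT * max(depth_m - water_table_m, 0.0)
    return total, total - pore_pressure


def cyclic_stress_ratio(a_max_g, total_kpa, effective_kpa, r_d):
    """CSR = 0.65 * (a_max / g) * (sigma_v / sigma_v') * r_d.

    a_max_g is peak ground acceleration as a fraction of g;
    r_d is a depth reduction factor between 0 and 1 (caller-supplied).
    """
    if effective_kpa <= 0:
        raise ValueError("effective stress must be positive")
    return 0.65 * a_max_g * (total_kpa / effective_kpa) * r_d


if __name__ == "__main__":
    # Hypothetical site: sand layer at 5 m depth, shaking of 0.3 g.
    for water_table in (1.0, 4.0):
        total, effective = vertical_stresses(5.0, water_table)
        csr = cyclic_stress_ratio(0.3, total, effective, r_d=0.96)
        print(f"water table at {water_table} m -> CSR {csr:.3f}")
```

With these made-up inputs, the shallower water table gives the higher CSR, because buoyancy lowers the effective stress that holds the grains together. A case-history plot of the kind described above would set a demand value like this against a measured soil resistance for each site.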

Unfortunately, this case-history-based research occurred primarily within the traditional academic framework. As such, individual researchers or small groups each assembled and interpreted their own subset of case history data to develop predictive liquefaction models. As a result, these individual models often produce different, even conflicting, results. Practicing engineers, construction companies, utilities, state agencies, insurance agencies and federal regulators must wrestle with those differences as they decide where and how to design, construct and protect critical infrastructure.

Left: Liquefaction associated with a 2016 earthquake in Christchurch, New Zealand, caused sand volcanos to erupt in the Avon-Heathcote Estuary.

Middle: When surrounded by liquefied soil, light structures buried underground can float to the surface. During the 2004 Chuetsu earthquake in Japan, liquefaction caused sidewalks to sink and manholes to rise.

Deterministic vs. Probabilistic Models

An added complexity is that many current liquefaction methods and models are largely deterministic, meaning they are based on specific inputs only and do not take uncertainty into account. In contrast, current approaches to risk analyses, decision making, regulation and design of critical infrastructure prefer performance-based, risk-informed probabilistic methods.

DETAIL

Mathematical models are often used as decision-making tools, processing data inputs to predict outcomes. A deterministic model typically yields a single solution. A probabilistic model provides a distribution of possible outcomes and indicates how likely each is to occur.
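The distinction can be sketched in a few lines of Python. This is a toy illustration, not any published liquefaction model: it assumes that liquefaction is triggered when soil resistance falls below seismic demand, and that both quantities are uncertain and lognormally distributed. All function names and numbers are hypothetical.

```python
import math
import random


def deterministic_liquefies(resistance, demand):
    """Single-valued check: liquefaction if factor of safety < 1."""
    return resistance / demand < 1.0


def probability_of_liquefaction(resistance_median, demand_median,
                                resistance_sigma_ln, demand_sigma_ln,
                                n_samples=20000, seed=0):
    """Monte Carlo estimate of P(resistance < demand).

    Each input is sampled from a lognormal distribution defined by its
    median and the standard deviation of its natural logarithm.
    """
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    failures = 0
    for _ in range(n_samples):
        r = rng.lognormvariate(math.log(resistance_median), resistance_sigma_ln)
        d = rng.lognormvariate(math.log(demand_median), demand_sigma_ln)
        if r < d:
            failures += 1
    return failures / n_samples


if __name__ == "__main__":
    # Hypothetical best-estimate inputs for one site.
    print("deterministic verdict:", deterministic_liquefies(0.25, 0.22))
    p = probability_of_liquefaction(0.25, 0.22, 0.3, 0.3)
    print(f"estimated probability of liquefaction: {p:.2f}")
```

For these inputs the deterministic check reports no liquefaction, since the best-estimate factor of safety is above 1, while the probabilistic estimate comes out at roughly 0.38. That gap is the information a single-valued answer hides from a risk-informed decision.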

In 2013, PEER researchers brought together an international team to develop a new paradigm for evaluating liquefaction research and engineering while developing models that address liquefaction triggering and consequences. The PEER project identified three objectives to revamp and standardize liquefaction modeling technology. First, the industry should substantially improve the quality, transparency and accessibility of case history data related to ground failure. Second, the industry should provide a coordinated framework for supporting studies to augment case history data. And third, the industry should provide an open, collaborative process where developer teams have access to common resources and share ideas and results during model development. This open process should reduce the potential for mistakes and develop best practices.

In 2016, the National Academy of Sciences documented concerns about seismically induced liquefaction, describing the current state of engineering practice and highlighting the shortcomings of existing methods and models. Its report recommended that the country establish a curated, publicly accessible database of relevant liquefaction triggering and consequence case-history data. The database should include case histories of events where soils have interacted with buildings and other structures, documenting relevant field, laboratory and physical model data. The database should meet strict protocols for data quality.

The U.S. Nuclear Regulatory Commission (NRC) also recognized the uncertainty in current liquefaction methods and models, citing specific concerns on how to develop and implement reliable, robust, performance-based and risk-informed nuclear safety regulations. In 2016, NRC contracted with SwRI’s CNWRA to support the first of the three PEER project objectives: developing a liquefaction case-history database. Many key stakeholders and participants are collaborating on this task.

JOINING THE CONSORTIUM

The Next-Generation Liquefaction (NGL) Consortium will bring together various governmental and commercial entities that would benefit from improved predictive liquefaction models. These include state and federal regulators, insurance and re-insurance companies and highway and bridge authorities, as well as owners and operators of large dams, ports and other critical infrastructure. NGL will combine the engineering and scientific experience of PEER with CNWRA expertise in seismology and SwRI’s extensive experience in managing large consortia. Interested stakeholders can contact Dr. Kristin Ulmer at SwRI or Dr. Jonathan Stewart at UCLA.

Questions about this story or the Next-Generation Liquefaction (NGL) Consortium? Contact John Stamatakos at +1 301 881 0290.