Background

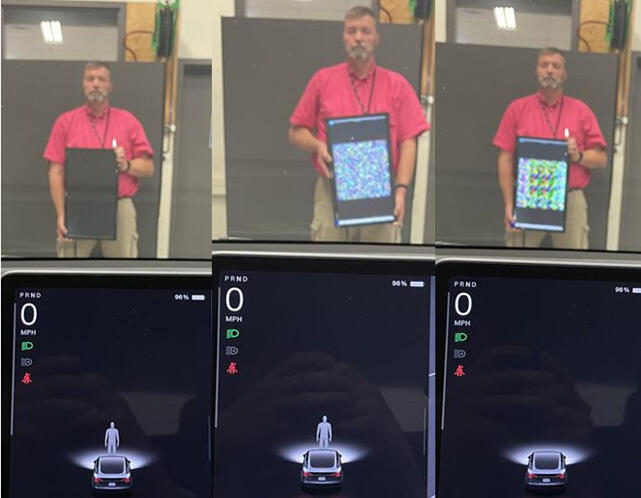

Figure 1: In the leftmost image, the vehicle detects a researcher as a pedestrian. The center image shows the neural network generating an image. In the rightmost image, the generated image has caused the vehicle to fail to recognize the pedestrian.

Many vehicles use computer vision systems for safety and driver assistance; however, companies often do not disclose system details. This forces testers and safety authorities to evaluate such a system as a black box: they can observe its responses to various inputs, but those observations offer limited insight into its internal operations. The ability to accurately model the system would enable much more effective independent verification and validation of its operation.

Approach

The project modeled the computer vision system using a Generative Adversarial Network (GAN), a specialized neural network architecture. The GAN generated inputs for the vision system and evaluated the system's responses as random noise was introduced. The neural network approximated the vision system by comparing its own response with that of the system under test. The approximation enabled researchers to gain a deeper understanding of the black box system and to identify scenarios where the vision system failed to produce accurate results.
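The idea of approximating a black box from its observed responses can be illustrated with a much simpler stand-in than a GAN. The sketch below is not the project's method: it swaps in a logistic-regression surrogate and a sign-based perturbation, and every name in it (`black_box_detector`, the four-value toy "image", the hidden weights) is a hypothetical assumption made for illustration. It queries the opaque detector with random inputs, fits a surrogate to the responses, and then uses the surrogate to search for an input the detector misses.

```python
import math
import random

# Hypothetical stand-in for the opaque vision system. A tester could only
# call it, never read these weights.
_HIDDEN_WEIGHTS = [0.9, -0.4, 0.6, 0.2]


def black_box_detector(image):
    """Return 1 if a 'pedestrian' is detected in a 4-value toy image, else 0."""
    score = sum(w * x for w, x in zip(_HIDDEN_WEIGHTS, image))
    return 1 if score > 0.5 else 0


def predict(weights, bias, image):
    """Surrogate's estimated probability that the black box reports a detection."""
    z = sum(w * x for w, x in zip(weights, image)) + bias
    z = max(-60.0, min(60.0, z))  # clamp so math.exp stays in range
    return 1.0 / (1.0 + math.exp(-z))


def fit_surrogate(images, responses, epochs=200, lr=0.1):
    """Fit logistic regression to observed responses (assumed surrogate, not a GAN)."""
    weights, bias = [0.0] * len(images[0]), 0.0
    for _ in range(epochs):
        for image, response in zip(images, responses):
            error = predict(weights, bias, image) - response
            weights = [w - lr * error * x for w, x in zip(weights, image)]
            bias -= lr * error
    return weights, bias


def find_missed_detection(weights, image, steps=20):
    """Move the image against the surrogate's weights until the black box misses it."""
    for step in range(1, steps + 1):
        eps = step / steps
        candidate = [
            min(1.0, max(0.0, x - eps * math.copysign(1.0, w)))
            for x, w in zip(image, weights)
        ]
        if black_box_detector(candidate) == 0:  # confirm against the real system
            return candidate
    return None


def run_demo(seed=0, n_queries=300):
    rng = random.Random(seed)

    def random_image():
        return [rng.random() for _ in range(4)]

    # Observe the black box on random (noise) inputs and fit the surrogate.
    train = [random_image() for _ in range(n_queries)]
    weights, bias = fit_surrogate(train, [black_box_detector(im) for im in train])

    # Measure how often the surrogate agrees with the black box on new inputs.
    held_out = [random_image() for _ in range(n_queries)]
    agreement = sum(
        (predict(weights, bias, im) > 0.5) == bool(black_box_detector(im))
        for im in held_out
    ) / n_queries

    detected = [1.0, 0.2, 0.5, 0.5]  # an input the black box does detect
    return agreement, detected, find_missed_detection(weights, detected)


agreement, detected, adversarial = run_demo()
print("surrogate agreement with black box:", agreement)
print("input that is no longer detected:", adversarial)
```

The surrogate never sees the hidden weights; it only sees inputs and the detector's yes/no responses, which mirrors the black-box constraint described above. A GAN replaces both the fixed surrogate and the hand-written perturbation with learned networks, but the query, compare, and search loop has the same shape.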

Accomplishments

The project approximated the vehicle system well enough that the neural network could generate images that caused pedestrians to be misclassified.

Figure 2: (Video) A mannequin stands behind a computer-generated image. In the foreground, the vehicle display initially identifies the mannequin as a pedestrian. However, the generated image causes the detection system to vacillate and ultimately to miss the identification entirely.