Background

An initiative is under way to develop an automated race analysis system for competitive swimming, one that improves performance insight through precise data collection and advanced analytics. The goal is a system that delivers high-accuracy, near real-time data to athletes, coaches, and analysts. In response, our research focused on developing and validating a technical approach that accurately captures metrics such as stroke count and stroke rate from race videos.

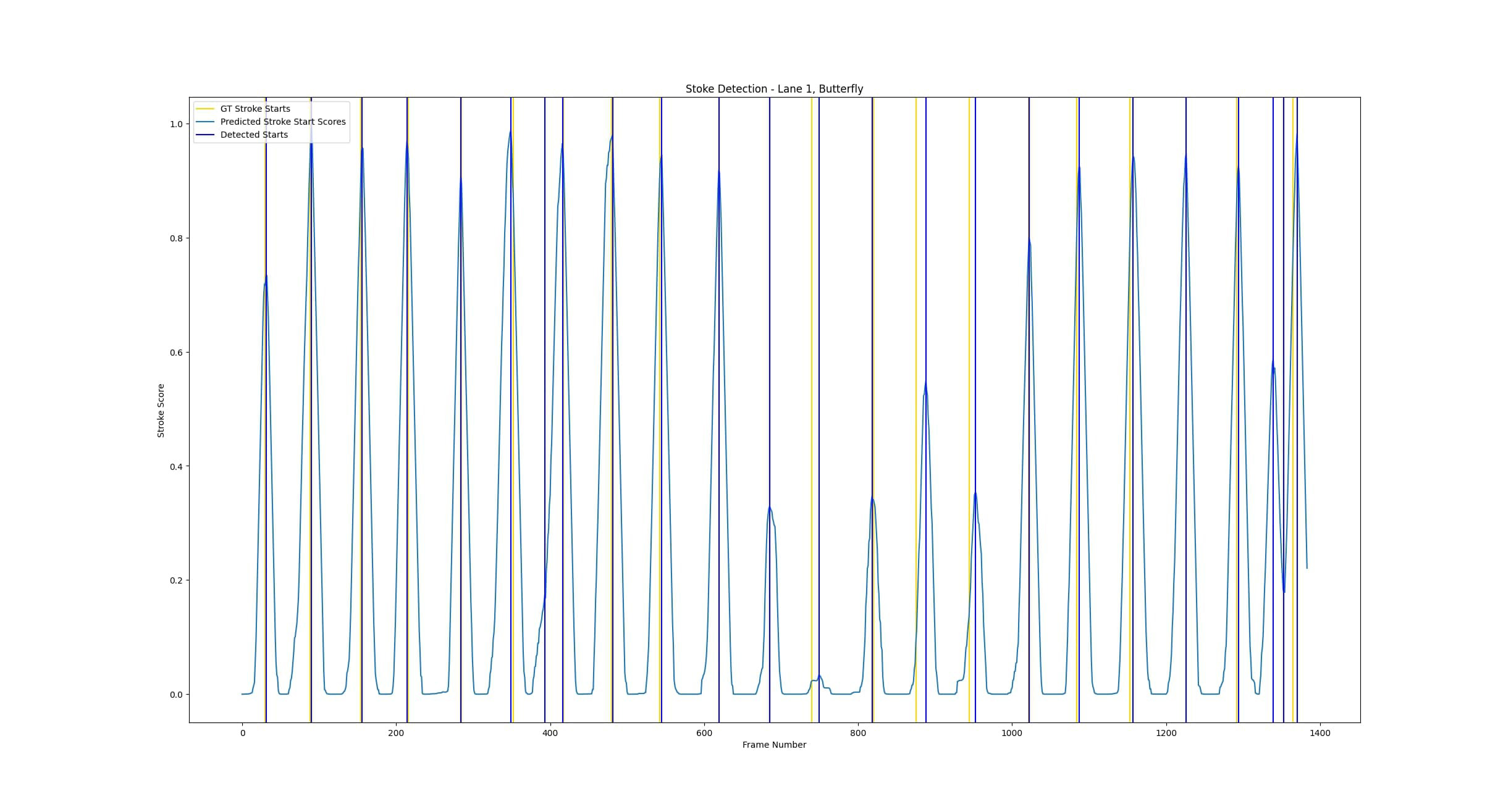

Figure 1: Stroke detection results for the butterfly stroke.

Approach

Our project set out to build a system that automatically analyzes swimming race footage and reports detailed performance data. The system is intended to give athletes, coaches, and analysts high-accuracy, near real-time values for specific metrics, chiefly stroke count and stroke rate, in support of performance evaluation and training.
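To make the two target metrics concrete, the following is a minimal sketch of how stroke count and stroke rate could be derived once individual strokes have been detected. It assumes the detector emits one timestamp (in seconds) per stroke; that input format and the function name `stroke_metrics` are illustrative assumptions, not details taken from the project.

```python
from typing import Optional, Sequence, Tuple


def stroke_metrics(stroke_times_s: Sequence[float]) -> Tuple[int, Optional[float]]:
    """Return (stroke count, stroke rate in strokes per minute).

    stroke_times_s holds one timestamp per detected stroke, in seconds.
    This input format is an assumption made for illustration.
    """
    times = sorted(stroke_times_s)
    count = len(times)
    if count < 2:
        # A rate needs at least one complete interval between two strokes.
        return count, None
    elapsed_s = times[-1] - times[0]
    if elapsed_s <= 0:
        return count, None
    # (count - 1) intervals were completed during the elapsed time.
    rate_per_min = 60.0 * (count - 1) / elapsed_s
    return count, rate_per_min
```

Dividing the number of completed intervals, rather than the raw count, by the elapsed time avoids overstating the rate on short segments. In practice the rate would likely be computed over a sliding window or per lap, which this sketch leaves out.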

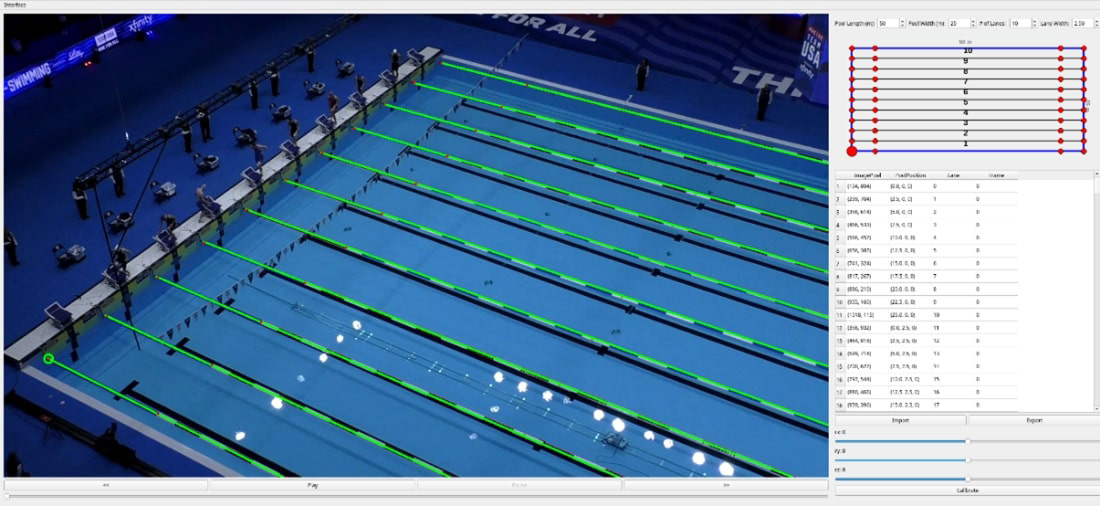

To achieve this, we developed algorithms that track swimmers and identify individual strokes in race videos. Strokes are identified with a spatio-temporal neural network based on a multi-scale vision transformer architecture, which was pretrained and then further trained with semi-supervised methods. We also created a calibration procedure that aligns video observations with the physical pool using known Olympic pool dimensions and landmarks. A graphical interface lets the user define key points and account for variations in camera position. Using the pool lane floats as markers, we generated a virtual top-down view of the pool, which improved swimmer tracking accuracy.

Figure 2: Pool calibration with projected lanes.
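The calibration idea described above can be illustrated with a planar homography that maps image pixels onto the pool surface. The sketch below is one standard way to do this (the direct linear transform), not necessarily the method used in the project. It assumes a 50 m by 25 m Olympic pool with ten 2.5 m lanes; the pixel coordinates and all function names are hypothetical. Coordinate normalization, which improves numerical conditioning, is omitted for brevity.

```python
import numpy as np

POOL_LENGTH_M = 50.0  # Olympic pool length
POOL_WIDTH_M = 25.0   # Olympic pool width (ten lanes of 2.5 m)


def estimate_homography(src_pts, dst_pts):
    """Estimate the 3x3 homography mapping src_pts to dst_pts (plain DLT)."""
    src = np.asarray(src_pts, dtype=float)
    dst = np.asarray(dst_pts, dtype=float)
    if src.shape != dst.shape or src.shape[0] < 4:
        raise ValueError("need at least four matching point pairs")
    rows = []
    for (x, y), (u, v) in zip(src, dst):
        rows.append([-x, -y, -1, 0, 0, 0, u * x, u * y, u])
        rows.append([0, 0, 0, -x, -y, -1, v * x, v * y, v])
    # The homography is the right singular vector with the smallest singular value.
    _, _, vt = np.linalg.svd(np.asarray(rows))
    H = vt[-1].reshape(3, 3)
    return H / H[2, 2]


def apply_homography(H, pts):
    """Map 2D points through H using homogeneous coordinates."""
    pts = np.asarray(pts, dtype=float)
    homog = np.hstack([pts, np.ones((len(pts), 1))])
    mapped = homog @ H.T
    return mapped[:, :2] / mapped[:, 2:3]


def lane_boundaries_in_image(H, n_lanes=10):
    """Project the end points of each lane boundary from pool metres to pixels."""
    H_inv = np.linalg.inv(H)
    ys = np.linspace(0.0, POOL_WIDTH_M, n_lanes + 1)
    starts = apply_homography(H_inv, [(0.0, y) for y in ys])
    ends = apply_homography(H_inv, [(POOL_LENGTH_M, y) for y in ys])
    return starts, ends


# Hypothetical pixel positions of the four pool corners, e.g. clicked in a GUI.
image_corners = [(420, 310), (1500, 300), (1850, 980), (60, 1000)]
pool_corners = [(0.0, 0.0), (POOL_LENGTH_M, 0.0),
                (POOL_LENGTH_M, POOL_WIDTH_M), (0.0, POOL_WIDTH_M)]
H = estimate_homography(image_corners, pool_corners)
```

With `H` in hand, `apply_homography(H, pixel_points)` gives top-down positions in metres, and `lane_boundaries_in_image(H)` returns pixel end points that could be drawn over the frame as projected lanes. More than four landmarks can be passed to `estimate_homography`, in which case the result is a least-squares fit.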

Accomplishments

The project demonstrated the feasibility of an automated system for precision swim metrics. Our stroke detection algorithm achieved 89.2% accuracy across all Olympic swimming strokes, with high precision and a low false-positive rate, both of which are essential for reliable stroke counting. The calibration tool, initially designed for a static camera setup, was adapted to handle moving-camera footage by estimating frame-to-frame transforms. Errors during panning and zooming remained a challenge, but using the pool lane floats as reference features improved stability.
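As an illustration of how frame-to-frame transforms can extend a static calibration to moving-camera footage, the sketch below chains per-frame transforms onto a reference homography. It assumes each transform is a 3x3 matrix that maps pixels of a frame into the previous frame; that convention and the name `propagate_calibration` are assumptions for this example, not the project's implementation.

```python
import numpy as np


def propagate_calibration(H_ref, frame_to_prev):
    """Return the image-to-pool homography for each frame after the reference.

    H_ref: 3x3 homography mapping reference-frame pixels to pool coordinates.
    frame_to_prev: sequence of 3x3 transforms; element k maps pixels of
        frame k + 1 into frame k (assumed convention).
    """
    H = np.asarray(H_ref, dtype=float)
    homographies = []
    for T in frame_to_prev:
        # Pixel in the new frame -> previous frame -> ... -> reference -> pool.
        H = H @ np.asarray(T, dtype=float)
        H = H / H[2, 2]  # fix the arbitrary projective scale
        homographies.append(H)
    return homographies
```

Because every estimate is multiplied into the running product, small errors in each transform accumulate over long pans or zooms. Re-anchoring periodically on fixed features such as the lane floats is one way to limit that drift.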

The stroke detection approach developed in this project can be adapted to other applications that require automated event detection. The backbone feature extractor was trained on diverse human activity data and can be applied to domains such as manufacturing, health monitoring, and security. This versatility suggests that our advances in stroke detection and calibration can extend beyond swimming to other fields where precise movement identification and tracking are crucial for performance monitoring and analysis.