Background

This project set out to improve three-dimensional (3D) reconstruction using diffusion models conditioned on two-dimensional (2D) image data. The aim was to address the limitations of traditional computer vision models by leveraging diffusion models, a class of generative models that iteratively transform noise into structured data. This approach applies to both generative tasks and inverse problems in perception, and it could improve the accuracy and efficiency of 3D reconstruction from 2D images.
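To make "iteratively transform noise into structured data" concrete, the sketch below follows the standard denoising diffusion (DDPM-style) formulation. This summary does not confirm that the project used this exact formulation. The linear noise schedule, the step count, and the names `make_schedule`, `add_noise`, and `estimate_clean` are illustrative assumptions. In a real model, a trained network would supply the noise estimate, optionally conditioned on image features.

```python
import numpy as np

def make_schedule(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Linear beta schedule (an assumed choice).

    Returns alpha_bar, the cumulative fraction of signal kept at each step.
    """
    betas = np.linspace(beta_start, beta_end, num_steps)
    return np.cumprod(1.0 - betas)

def add_noise(x0, t, alpha_bar, rng):
    """Forward process: blend clean data x0 with Gaussian noise at step t."""
    eps = rng.standard_normal(x0.shape)
    xt = np.sqrt(alpha_bar[t]) * x0 + np.sqrt(1.0 - alpha_bar[t]) * eps
    return xt, eps

def estimate_clean(xt, eps_hat, t, alpha_bar):
    """Invert the forward blend, given a noise estimate eps_hat.

    A trained denoiser would predict eps_hat; here it is passed in directly.
    """
    return (xt - np.sqrt(1.0 - alpha_bar[t]) * eps_hat) / np.sqrt(alpha_bar[t])
```

If the noise estimate is exact, `estimate_clean` recovers the original data. A sampler repeats this kind of correction over many steps, starting from pure noise.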

Approach

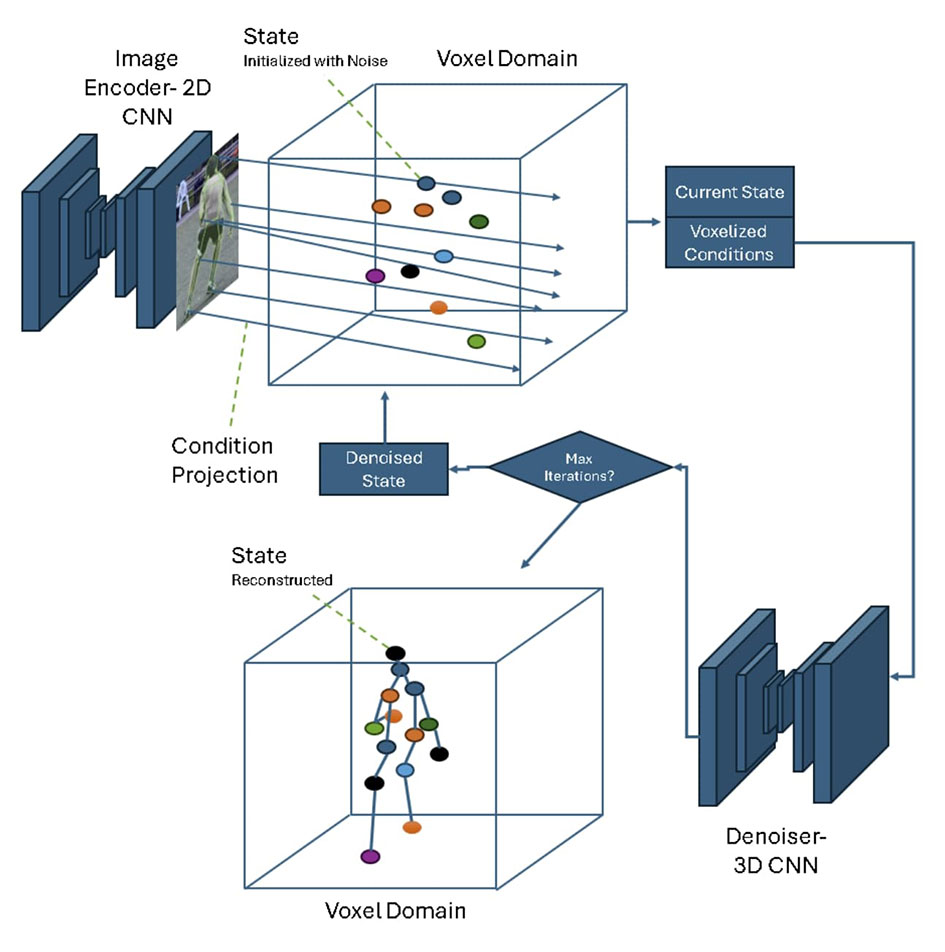

The project’s objective was to combine the 2D outputs of existing SwRI computer vision models into a multichannel volumetric representation and reconstruct a 3D state from a single 2D view. The methodology centered on a novel architecture based on ray casting. First, an image encoder extracted 2D feature vectors from the input image. These feature vectors were then projected through a voxel domain, creating a vector field aligned with the camera’s projective geometry. This vector field, effectively a collection of features, was used to condition the denoising process that produced the volumetric reconstruction.
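The ray-casting step can be illustrated with a minimal sketch. It is not the project's implementation. It assumes a pinhole camera without skew, voxel sample points expressed in the camera frame with +z pointing away from the camera, and nearest-neighbor sampling. The function name `lift_features_to_voxels` and all of its parameters are hypothetical.

```python
import numpy as np

def lift_features_to_voxels(feat_map, K, grid_min, grid_max, res):
    """Fill a voxel grid with 2D features sampled along camera rays.

    feat_map: (H, W, C) per-pixel features from a 2D encoder (assumed layout).
    K: 3x3 pinhole intrinsics (skew is ignored in this sketch).
    grid_min, grid_max: corners of an axis-aligned box in the camera frame.
    Returns a (res, res, res, C) volume. Voxels that project outside the
    image or lie behind the camera are left at zero.
    """
    H, W, C = feat_map.shape
    # Regular grid of sample points inside the box.
    axes = [np.linspace(grid_min[i], grid_max[i], res) for i in range(3)]
    X, Y, Z = np.meshgrid(*axes, indexing="ij")
    pts = np.stack([X, Y, Z], axis=-1).reshape(-1, 3)

    # Perspective projection: all points on one camera ray share a pixel.
    in_front = pts[:, 2] > 1e-6
    z = np.where(in_front, pts[:, 2], 1.0)  # placeholder depth avoids divide-by-zero
    u = K[0, 0] * pts[:, 0] / z + K[0, 2]
    v = K[1, 1] * pts[:, 1] / z + K[1, 2]

    # Nearest-neighbor lookup (bilinear sampling would be smoother).
    ui = np.rint(u).astype(int)
    vi = np.rint(v).astype(int)
    valid = in_front & (ui >= 0) & (ui < W) & (vi >= 0) & (vi < H)

    volume = np.zeros((pts.shape[0], C), dtype=feat_map.dtype)
    volume[valid] = feat_map[vi[valid], ui[valid]]
    return volume.reshape(res, res, res, C)
```

Because every sample point along a ray receives the same pixel's features, the resulting volume follows the camera's projective geometry. One common way to use such a volume, assumed here rather than confirmed by this summary, is to concatenate it channel-wise with the noisy voxel grid at each denoising step.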

Accomplishments

SwRI developed a method for volumetric reconstruction that converts an object silhouette image into a 3D shape. The project also achieved pose up-conversion, transforming a 2D image into a more detailed 3D representation. These results demonstrate that diffusion models conditioned on 2D image data can enhance 3D reconstruction capabilities.

Figure 1: Diffusion model workflow.

Resulting Project Work

10-102912